Migrating an On-Premises Database to the Cloud

The future is based on cloud technology. As data requirements increase, so does the need for more robust and scalable options to house this data. While data traditionally may be housed on-premises, data storage in the cloud is far more easily scalable, backups are more streamlined, and there is a far lower chance of data loss, and as such, more and more businesses are realizing the value of promoting their on-premises databases to the Cloud. In the following, I present a novel example of doing so through migrating an on-premises Oracle database to an Azure Database for PostgreSQL cloud instance.

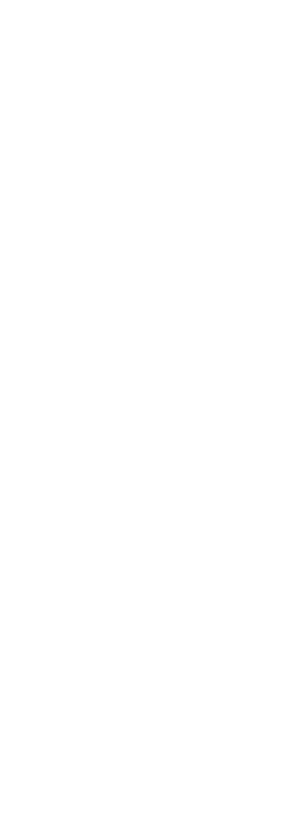

The database in question was associated with an ASP.NET retail application containing products, shippers, and customers. The customer was interested in such a migration for several reasons:

- Simplified licensing: Azure Database for PostgreSQL is licensed in terms of resources consumed, and many extensions are free.

- Powerful features: Azure Database for PostgreSQL integrates well with cloud services; it can scale and implement high availability; and it offers enhanced JSON storage which was a customer requirement.

With this in mind, I will give a brief overview of the migration process and the key takeaways from this process in the hopes that this will not only show you a real-world example of the Cloud migration process, but also how to configure such a database.

Migration Procedure

The process began by creating the Oracle database on an Azure Windows Virtual Machine (VM). To do this, I reproduced the objects (tables, indexes, views, packages, etc.) and inserted data into the tables. I considered the issues that must be addressed in a production database migration which often can be overlooked.

- It is crucial to check for invalid objects in an Oracle database. As a result, I included a query that lists all invalid database objects, allowing the development team to repair and recompile those objects.

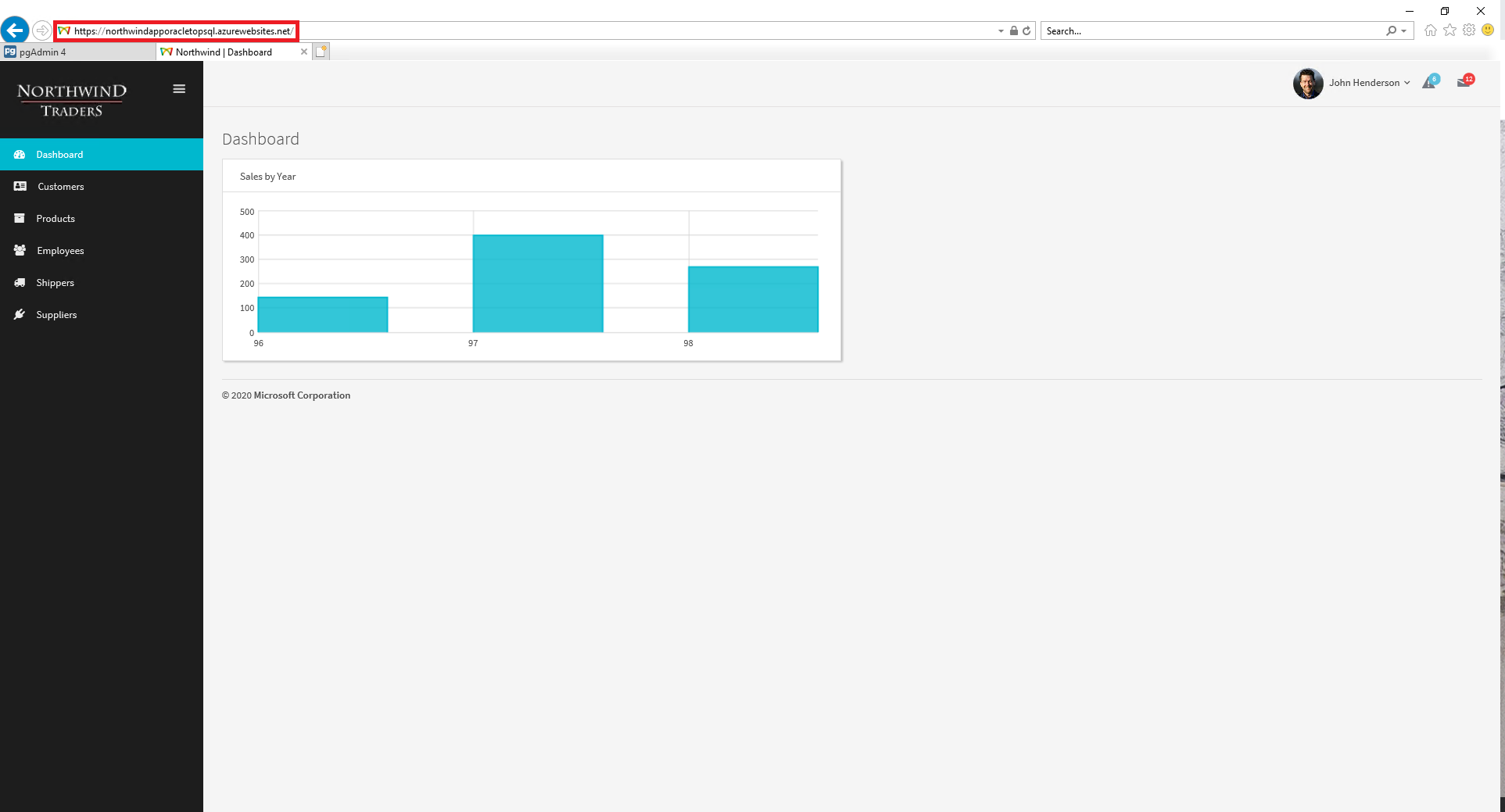

- Some objects, such as stored procedures, may require additional effort to migrate. Using ora2pg, a migration utility, I created a report which outlines the objects that will require additional effort to migrate, as seen in the following. This allows you to measure the human effort to complete the migration.

In addition, I created the Azure Database for PostgreSQL target database, configured the application user in the database, and configured an Azure App Service instance for ASP.NET 4.7. App service offers excellent benefits for cloud deployments, including support for different technologies, simplified deployment, and cloud compute scalability.

At this point, the resources were ready for the migration. I installed and configured ora2pg on the VM. Moreover, to handle data export, I described the configuration of Microsoft Azure DMS.

Then, I completed the migration in the following order:

- Created table structure in the target database using ora2pg

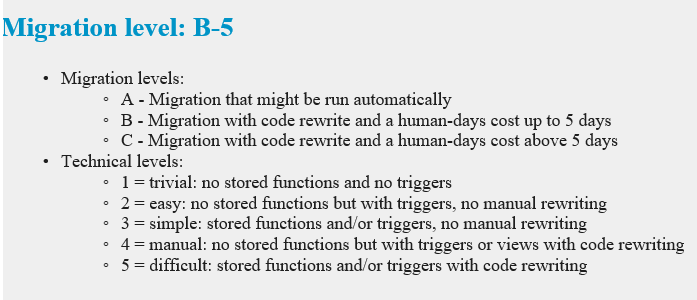

- Exported data from source to target using Database Migration Services (DMS) as seen in the following.

Mapping Oracle Source Tables to PostgreSQL Target Tables (DMS)

Mapping Oracle Source Tables to PostgreSQL Target Tables (DMS) - Exported constraints, foreign keys, and indexes to the target (ora2pg)

- Altered and exported views (ora2pg)

- Altered and exported stored procedures (ora2pg)

After completion of these steps, I was ready to execute the ASP.NET application against the new PostgreSQL cloud database. I used a third-party connector with ADO.NET support (Devart dotConnect), so I would not need to make significant changes to the application (just new Entity Data Models). I did modify the code that invoked a stored procedure, and after testing, I believed that I was very close to deploying the entire solution to Azure. However, one final struggle remained as I tried to migrate the ASP.NET app to Azure App Service.

Troubleshooting

After deploying the application to Azure App Service from Visual Studio, I tried navigating to the app. Yet every time, a 500 error with little explanation appeared in my browser window.

I appreciate the value of lists, so I began my troubleshooting process by listing potential variables that I knew were not the source of the problem:

- The connection string: the application worked on the VM flawlessly with Azure Database for PostgreSQL

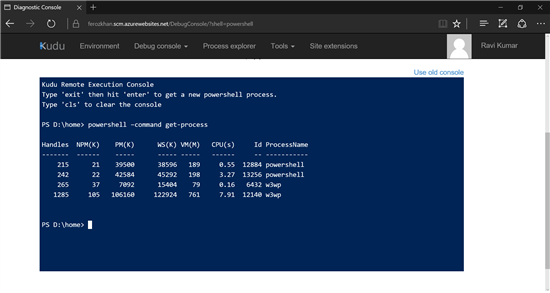

- Visual Studio and App Service Deployment: upon navigating to the Kudu console, I verified that assets were delivered to the App Service instance. These assets also refer to the DLLs that are usually stored in the GAC of the development machine . The following is the Kudu Console on Windows App Service from a different project.

Source: Microsoft TechNet

Source: Microsoft TechNet - Database Access Security: I gave the application explicit access to the PostgreSQL database, so I knew the source of my error wasn’t to be found here.

Since the problem had originated after the application was deployed to the cloud, I decided to employ Azure services to trace the problem:

- The Kudu console is provided with all Azure App Service instances. Instantly, I could verify that all assets were deployed and that IIS was serving my application.

- Azure App Service provides the opportunity to view application and server logs via the following command, yet I did not see any information that pointed to the issue. Later, I did later learn that messages invoked through the System.Diagnostics.Trace class would appear in the application logs.

az webapp log tail --name appname --resource-group myResourceGroup

Curiously, the solution was a fairly simple one: there was a licensing issue with the PostgreSQL ADO.NET framework. I simply had to enable debugging in the solution Web.config file, as seen below in the code snippet. At this point, I redeployed the solution, and voilà!

<system.web>

...

<customErrors mode="Off"/>

</system.web>

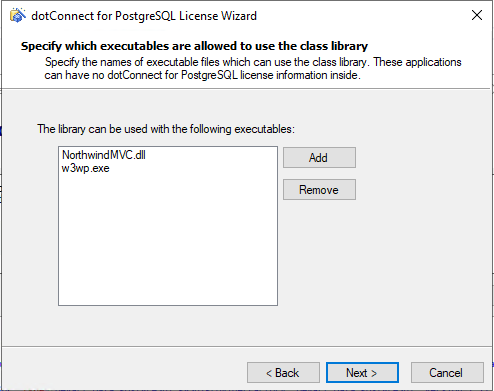

I was then able to provide the necessary files and to register the framework with the IIS worker processes and the built solution, as seen in the following.

Conclusion

Thus completes a short overview of one example of a database migration. This process is a nuanced, complex process; however, by taking extra steps such as creating a migration report, making and testing code modifications, and following a methodical troubleshooting process, I have shown how this process can be done with minimum churn. It is important to note that slightly different steps may need to be taken depending on the nature of the migration. For example, in large and data-sensitive cases, an ExpressRoute connection may be needed. Nonetheless, the fundamental procedures remain the same, with details like the migration path dictated by business leaders and the migration team (e.g. whether storage-intensive tables can be refactored).

The first step starts with you

Get in touch!

The bigger the challenge, the more excited we get. Don’t be shy, throw it at us. We live to build solutions that work for you now and into the future. Get to know more about who we are, what we do, and the resources on our site. Drop a line and let’s connect.